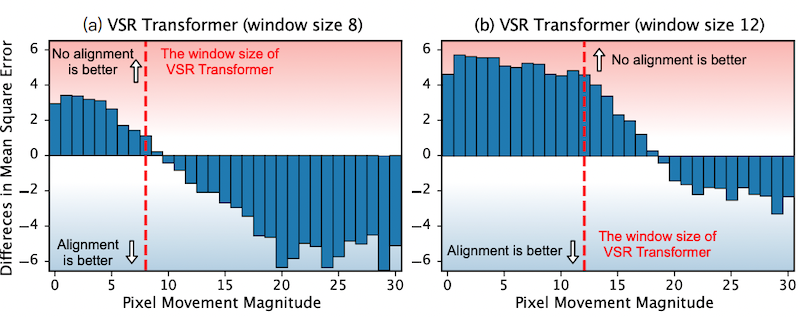

Our experiments show that: (i) VSR Transformers can directly utilize multi-frame information from unaligned videos, and (ii) existing alignment methods are sometimes harmful to VSR Transformers. we propose a new and efficient alignment method called patch alignment, which aligns image patches instead of pixels. VSR Transformers equipped with patch alignment could demonstrate SoTA performance.

Rethinking Alignment in Video Super-Resolution Transformers

Neural Information Processing Systems (NeurIPS), 2022

Shuwei Shi*, Jinjin Gu*, Liangbin Xie, Xintao Wang*, Yujiu Yang, Chao Dong
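The patch-alignment idea in this summary (move whole patches rather than warping individual pixels) can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: it assumes a single-channel frame, a precomputed optical-flow field `flow` giving per-pixel (dy, dx) offsets from the reference frame into the supporting frame, non-overlapping square patches, and one rounded mean motion vector per patch. The function name `patch_align` and these details are hypothetical.

```python
import numpy as np

def patch_align(support, flow, patch=8):
    """Align a supporting frame to the reference frame patch by patch (sketch).

    support: (H, W) supporting frame.
    flow:    (H, W, 2) per-pixel (dy, dx) offsets from the reference frame
             into the supporting frame (assumed to be precomputed).
    """
    H, W = support.shape
    aligned = np.empty_like(support)
    for y0 in range(0, H, patch):
        for x0 in range(0, W, patch):
            y1, x1 = min(y0 + patch, H), min(x0 + patch, W)
            # One motion vector per patch: the rounded mean flow inside it.
            dy, dx = np.rint(flow[y0:y1, x0:x1].reshape(-1, 2).mean(axis=0)).astype(int)
            # Shift the whole patch by that integer offset, clamping at the borders.
            # Pixels are copied as-is, never interpolated.
            ys = np.clip(np.arange(y0, y1) + dy, 0, H - 1)
            xs = np.clip(np.arange(x0, x1) + dx, 0, W - 1)
            aligned[y0:y1, x0:x1] = support[np.ix_(ys, xs)]
    return aligned
```

For a supporting frame that is a pure (2, 3)-pixel translation of the reference and a matching constant flow, the aligned output reproduces the reference everywhere except the clamped bottom rows and right columns. Because every output value is copied from the supporting frame, no new intensities are created by interpolation.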

Discovering "Semantics" in Super-Resolution Networks

arXiv, 2021

Yihao Liu*, Anran Liu*, Jinjin Gu, Zhipeng Zhang, Wenhao Wu, Yu Qiao, Chao Dong

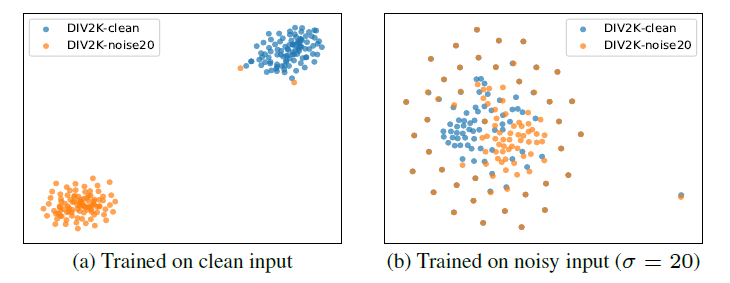

Can we find any “semantics” in SR networks? In this paper, by analyzing feature representations with dimensionality reduction and visualization, we discover deep semantic representations in SR networks, namely deep degradation representations (DDR), which relate to the type and degree of image degradation.
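A rough sketch of the analysis step described above: pool one layer's feature maps per image and project them to two dimensions for plotting. This is an illustration under assumptions, not the paper's pipeline. PCA (via SVD) is used here only as a simple, dependency-free stand-in and is not necessarily the reduction method used in the paper; the function name `project_features` and the input layout are hypothetical.

```python
import numpy as np

def project_features(feats, k=2):
    """feats: (N, C, H, W) feature maps from one layer, one row per input image.
    Returns (N, k) coordinates on the top-k principal axes."""
    pooled = feats.mean(axis=(2, 3))         # global average pooling -> (N, C)
    centered = pooled - pooled.mean(axis=0)  # remove the per-channel mean
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:k].T               # PCA projection onto the top-k axes
```

Scatter-plotting the output for images that carry two different degradations (for example blur and noise) shows whether the pooled features separate by degradation: if they do, the two groups form distinct clusters.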

Finding Discriminative Filters for Specific Degradations in Blind Super-Resolution

Neural Information Processing Systems (NeurIPS), Spotlight, 2021

Liangbin Xie*, Xintao Wang*, Zhongang Qi, Chao Dong, Ying Shan

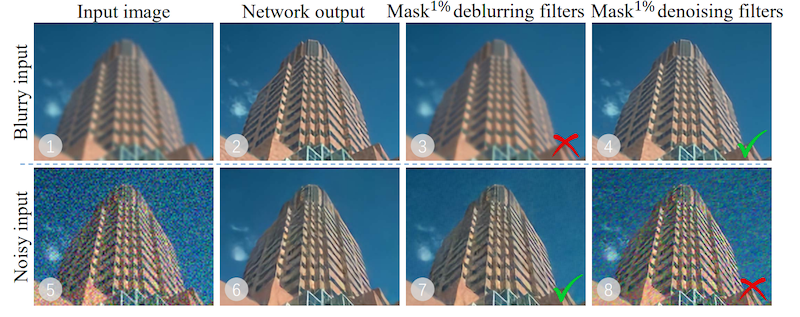

Recent blind super-resolution methods typically consist of two branches: one for degradation prediction and the other for conditional restoration. Our experiments show that a one-branch network can achieve performance comparable to the two-branch scheme. How can one-branch networks automatically learn to distinguish degradations? We propose a new diagnostic tool, the Filter Attribution method based on Integral Gradient (FAIG), which aims to find the filters most discriminative for removing specific degradations in blind SR networks, rather than the most influential input pixels or features. Our findings not only help us better understand network behavior inside one-branch blind SR networks but also provide guidance for designing more efficient architectures and diagnosing blind SR networks.
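The summary describes attributing with respect to filters instead of input pixels. The toy sketch below illustrates that idea as integrated gradients taken in parameter space; it is not the authors' FAIG code. It assumes a linear model whose weight rows play the role of filters, a squared-error loss on a single degraded input `x` with target `y`, and a straight-line path from hypothetical baseline weights `W_base` to target weights `W_target`. All function names are hypothetical.

```python
import numpy as np

def loss(W, x, y):
    """Squared-error loss of a toy linear model whose rows act as 'filters'."""
    r = W @ x - y
    return 0.5 * float(r @ r)

def loss_grad(W, x, y):
    """Analytic gradient of `loss` with respect to W."""
    return np.outer(W @ x - y, x)

def filter_integrated_gradients(W_base, W_target, x, y, steps=32):
    """Signed integrated-gradient score per filter (row) along the straight
    path from W_base to W_target, approximated with the midpoint rule."""
    delta = W_target - W_base
    avg_grad = np.zeros_like(W_base)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        avg_grad += loss_grad(W_base + alpha * delta, x, y)
    avg_grad /= steps
    # Sum the per-weight attributions within each filter.
    return (delta * avg_grad).sum(axis=1)
```

Ranking filters by `np.abs(scores)` puts the most discriminative ones first. Because the toy loss is quadratic along a straight path, the midpoint rule is exact here and the signed scores sum (up to rounding) to `loss(W_target) - loss(W_base)`, the completeness property of integrated gradients. A filter whose weights do not change along the path receives a score of zero.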

Interpreting Super-Resolution Networks with Local Attribution Maps

Computer Vision and Pattern Recognition (CVPR), 2021

Jinjin Gu, Chao Dong

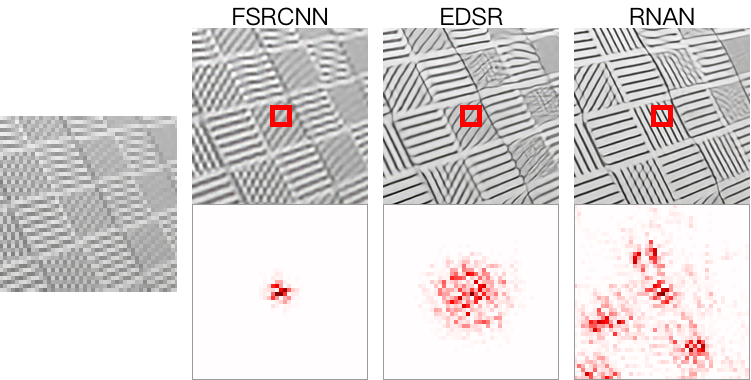

SR networks remain poorly understood, and few works have attempted to interpret them. In this work, we perform attribution analysis of SR networks, which aims to find the input pixels that strongly influence the SR results. We propose a novel attribution approach called local attribution map (LAM) to interpret SR networks. Our work opens new directions for designing SR networks and interpreting low-level vision deep models.
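A rough sketch of attributing one local region of an SR output back to input pixels, in the spirit of the summary above: integrated gradients of a local detector with respect to the input, starting from a blurred baseline. Everything here is a hypothetical toy. The "SR model" is nearest-neighbour 2x upsampling, the detector is the sum of squared horizontal differences in one output window, gradients are central differences, and the path is a straight line. The published LAM method uses a progressive-blurring path function instead of this straight line.

```python
import numpy as np

def toy_sr(img):
    """Hypothetical stand-in for an SR network: nearest-neighbour 2x upsampling."""
    return np.kron(img, np.ones((2, 2)))

def local_detector(sr, y0=4, x0=4, size=4):
    """Scalar summary of one output window: sum of squared horizontal differences."""
    patch = sr[y0:y0 + size, x0:x0 + size]
    return float((np.diff(patch, axis=1) ** 2).sum())

def box_blur(img, r=2):
    """Box-blurred copy of img, used as the attribution baseline."""
    k = 2 * r + 1
    padded = np.pad(img, r, mode="edge")
    out = np.zeros_like(img, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def numerical_grad(f, img, h=1e-3):
    """Central-difference gradient of scalar f with respect to every pixel."""
    g = np.zeros_like(img)
    for idx in np.ndindex(img.shape):
        e = np.zeros_like(img)
        e[idx] = h
        g[idx] = (f(img + e) - f(img - e)) / (2 * h)
    return g

def integrated_gradients(f, img, baseline, steps=16):
    """Per-pixel attribution along the straight path baseline -> img (midpoint rule)."""
    total = np.zeros_like(img)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        total += numerical_grad(f, baseline + alpha * (img - baseline))
    return (img - baseline) * total / steps

# Usage: attribute the detector on output window [4:8, 4:8] to an 8x8 input.
rng = np.random.default_rng(0)
img = rng.random((8, 8))
f = lambda im: local_detector(toy_sr(im))
attr = integrated_gradients(f, img, box_blur(img))
```

With the default window, only the 2x2 block of input pixels that feeds output rows and columns 4 to 7 can receive non-zero attribution, and the attributions sum to `f(img) - f(box_blur(img))`. In a real network the receptive field is much larger, which is what makes such a map informative.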